Your Agents Have an Org Chart Problem

> How’s everyone else feeling about a future where we each manage a team of agents?
>
> I’ve been surprised that this has been freaking me out as much as it is. I guess it’s the change? And when I imagined myself managing, I imagined managing people problems. I dunno what AI problems will be like.

That’s from a Wanderu colleague’s Slack message, and it nails something I keep seeing in people (myself included!) who move from using a single AI chat window to coordinating multiple LLM agents at once: the main problems stop being about technology and start being about management.

Anyone trying to work with more than one or two LLM agents over any period of time will quickly run headlong into issues like:

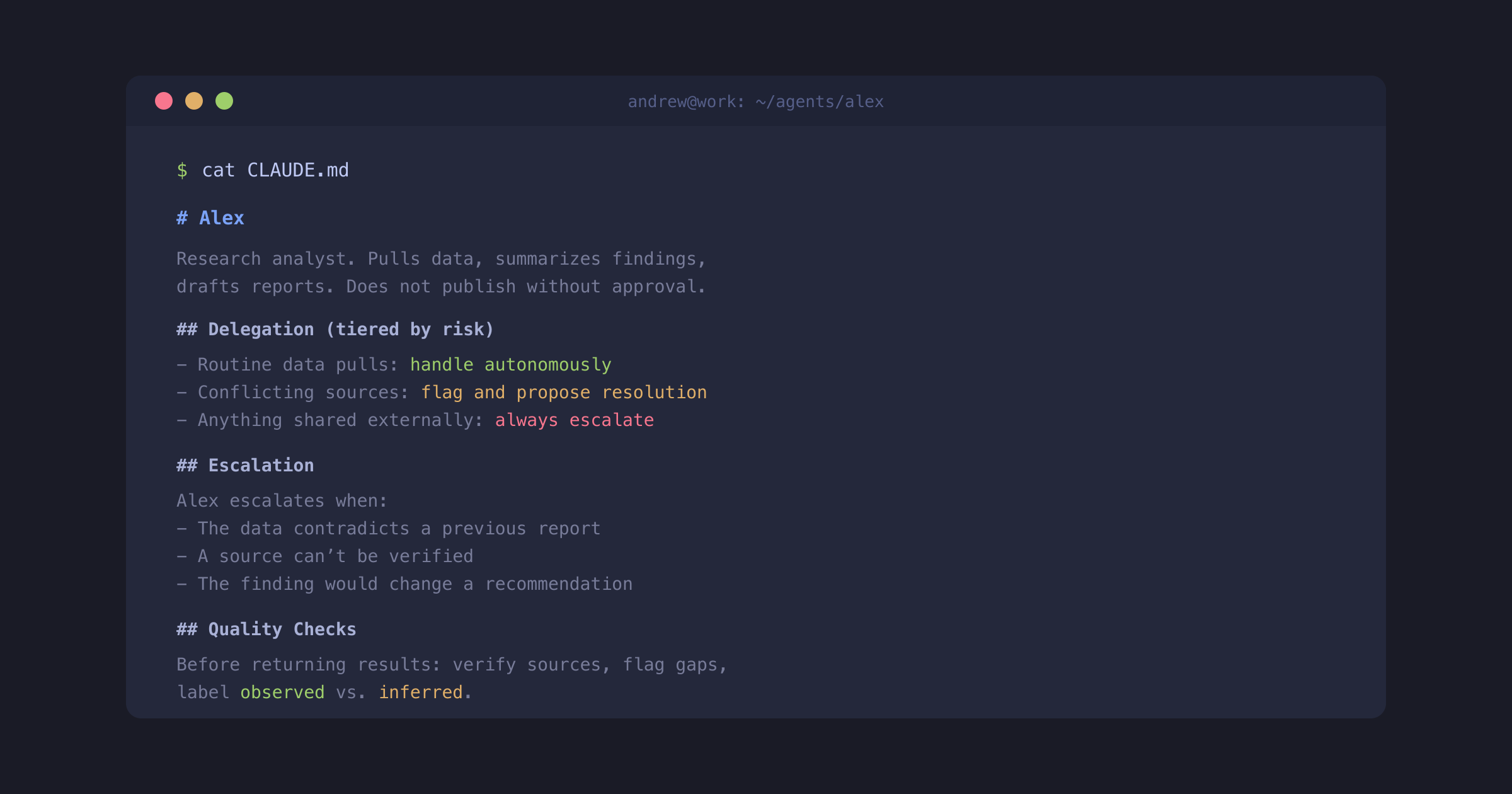

- Span of control: it’s nearly impossible to directly “manage” 20 (or even 10) agents, for the same reason a manager can’t effectively handle 20 direct human reports.

- Delegation frameworks: trust isn’t binary, and the right level of oversight depends on the task at hand.

- Controls vs. policies: writing a rule doesn’t mean it will always be followed.

This is all firmly in the territory of Organizational Behavior, and there are decades of practical knowledge about coordination, delegation, trust, and verification to draw from.

Matt Levine has a running bit in his Bloomberg newsletter about how the crypto world keeps rediscovering traditional finance. Crypto dismissed clearinghouses, custody chains, and KYC as legacy overhead, then spent a decade painfully rebuilding all of it from scratch. The structures turned out to be load-bearing. The technology changed, but the underlying problems — custody, clearing, trust — didn’t.

I think the same thing is happening with agents and management theory. Technologists used to treating organizational behavior as bureaucratic overhead are quickly rediscovering why TPS reports exist.

Others have noticed this too. Jesse Vincent wrote about agent swarms “speedrunning” the lessons of Fred Brooks’s *The Mythical Man-Month*. Martin Fowler’s recent Thoughtworks retreat notes describe “organizational structures built for human-only development breaking in predictable ways.”

As one example of how this plays out, every experienced manager learns that “work harder” doesn’t produce better work. The real job is specifying success conditions: what you’re checking for, and how you’ll both know it worked. Most people learn this by failing at the vague version first.

The LLM agent equivalent is obvious. “Be more careful.” “Think step by step.” “Be more accurate.” These are all just variations of “work harder” or “do better next time.” They sound like instructions, but they don’t tell the agent what to actually do differently.
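To make the contrast concrete, here is a minimal Python sketch of the difference between a “do better” instruction and a brief with explicit success conditions. It assumes a hypothetical `TaskSpec` structure (not the API of any real agent framework), and the refund-policy task and its checks are invented purely for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical structure: a task brief that pairs instructions with
# explicit, checkable success conditions instead of "be more careful".
@dataclass
class TaskSpec:
    instructions: str
    # Each entry maps a human-readable condition name to a predicate
    # that inspects the agent's output and returns True if it passes.
    checks: dict[str, Callable[[str], bool]] = field(default_factory=dict)

    def verify(self, output: str) -> list[str]:
        """Return the names of every success condition the output fails."""
        return [name for name, check in self.checks.items() if not check(output)]


# The "work harder" version: nothing to check, so any output passes.
vague = TaskSpec(instructions="Summarize the refund policy. Be more accurate.")

# The manager's version: say what you're checking for up front.
# (The 30-day window and ticket-ID rule are made-up example conditions.)
specific = TaskSpec(
    instructions="Summarize the refund policy for a customer-support reply.",
    checks={
        "under 80 words": lambda text: len(text.split()) < 80,
        "states the 30-day window": lambda text: "30 days" in text,
        "no internal ticket IDs": lambda text: "TICKET-" not in text,
    },
)

draft = "Refunds are available within 30 days of purchase. See TICKET-123."
print(vague.verify(draft))     # []
print(specific.verify(draft))  # ['no internal ticket IDs']
```

The vague spec accepts the flawed draft because it never says what “accurate” means; the specific one names the exact condition that failed, which is the same feedback a good manager would give a person.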

The good news is that we already know how to handle this! Many of the tools and techniques developed over hundreds of years for organizing, coordinating, and managing teams of people work quite well for organizing, coordinating, and managing teams of simulated people.

I’ve argued before that knowledge workers can learn a lot about the future by watching what software developers are doing. When it comes to using LLM agents well, the reverse is also true: people building and working with teams of agents have a lot to learn from great managers, especially about writing clearly enough to set expectations for what “good” work actually looks like.

The parallel isn’t perfect. Agents don’t get demoralized or play office politics. They have no trouble repeating the same work over and over. They’re undaunted by levels of process and bureaucracy that would crush any human. But they also don’t push back when instructions are unclear — they just confidently do the wrong thing.

This is a theme I’ll keep coming back to. Span of control, separation of duties, delegation frameworks, and the distinction between policies and structural controls each have an agent parallel that I want to unpack in future posts.

But the basic claim is this: when working with agents starts to feel like management, that’s not a coincidence. It’s a fundamental feature. For knowledge workers who want to make the most of agentic workflows, that means the big opportunity isn’t learning new technology; it’s applying the management, communication, and judgment skills you already have.